gen ai - UX Case Study

UX Case Study

Model Migration as a Lifecycle Problem

Amazon Bedrock, 2026

Enabling enterprises to migrate models without losing trust, quality, or control

PROBLEM OVERVIEW

Amazon Bedrock is a managed GenAI platform used by enterprises running production AI systems in regulated, customer-facing environments. The central challenge is that foundation models evolve rapidly, but enterprise expectations for stability do not.

Model upgrades change GenAI behavior in subtle ways. Prompts migrated across models or providers often underperform and require extensive re-optimization. For large organizations, an “upgrade” becomes a major operational project, not a quick configuration change.

One customer spent 5400+ engineering hours on a single migration.

Executive summary

I was the lead UX designer responsible for the end-to-end model migration experience in Amazon Bedrock. My scope spanned prompt optimization, evaluation workflows, and the system that enables customers to safely change foundation models in production. That puts this work in a specific category: the operator layer of AI systems, not the agent itself — the interfaces that make autonomous behavior visible, controllable, and safe to act on.

The hardest decisions were not about UI polish. They were about judgment and sequencing. To ship a viable first release, my team agreed to defer centralized data management, schema flexibility, and expanded observability so we could validate the core migration lifecycle with real customers.

The result was not a one-click migration. It was a controlled, repeatable process that gave customers confidence to evaluate change, manage risk, and establish clear ownership over AI decisions.

Design thesis and principles

DESIGN THESIS

AI systems and agents are only trustworthy when change is explicit.

This work treated model migration as a change-management problem, not an optimization exercise. The primary failure modes designed against were silent behavior change, inefficient token utilization, and unexplainable shifts in output quality.

PRINCIPLES THAT SHAPED EVERY DECISION

- Make change visible: what changed, when, and relative to what baseline

- Bound risk by default: prevent production exposure until baselines are understood

- Constrain automation to evidence: automate analysis and comparisons, not decisions

- Human-in-the-loop: humans remain responsible for approval and deployment

- Enable safe exit: stop, pause, or abandon without irreversible impact

Who I designed for

Model migration sits between system reliability and response quality. I designed for two primary personas.

BEDROCK DEVELOPER

Primary focus

Building reliable AI applications in production.

Responsibilities

- Application performance under real user inputs

- Token usage and cost management

- Handling large documents and context limits

- Stability during upgrades

Needs

- Clear performance and cost comparisons

- Confidence that behavior will not regress

- Safe rollout paths to production

PROMPT ENGINEER

Primary focus

Getting the best possible responses from the model.

Responsibilities

- Prompt design and iteration

- Quality evaluation across models

- Working within token and instruction limits

- Balancing detail with response flexibility

Needs

- Side-by-side output comparisons

- Fast testing workflows

- Evidence that quality improves, not degrades

Shared Constraints

Both personas operate within model limits, balance cost and performance, and need validation before committing to a model change.

The system, not the screens

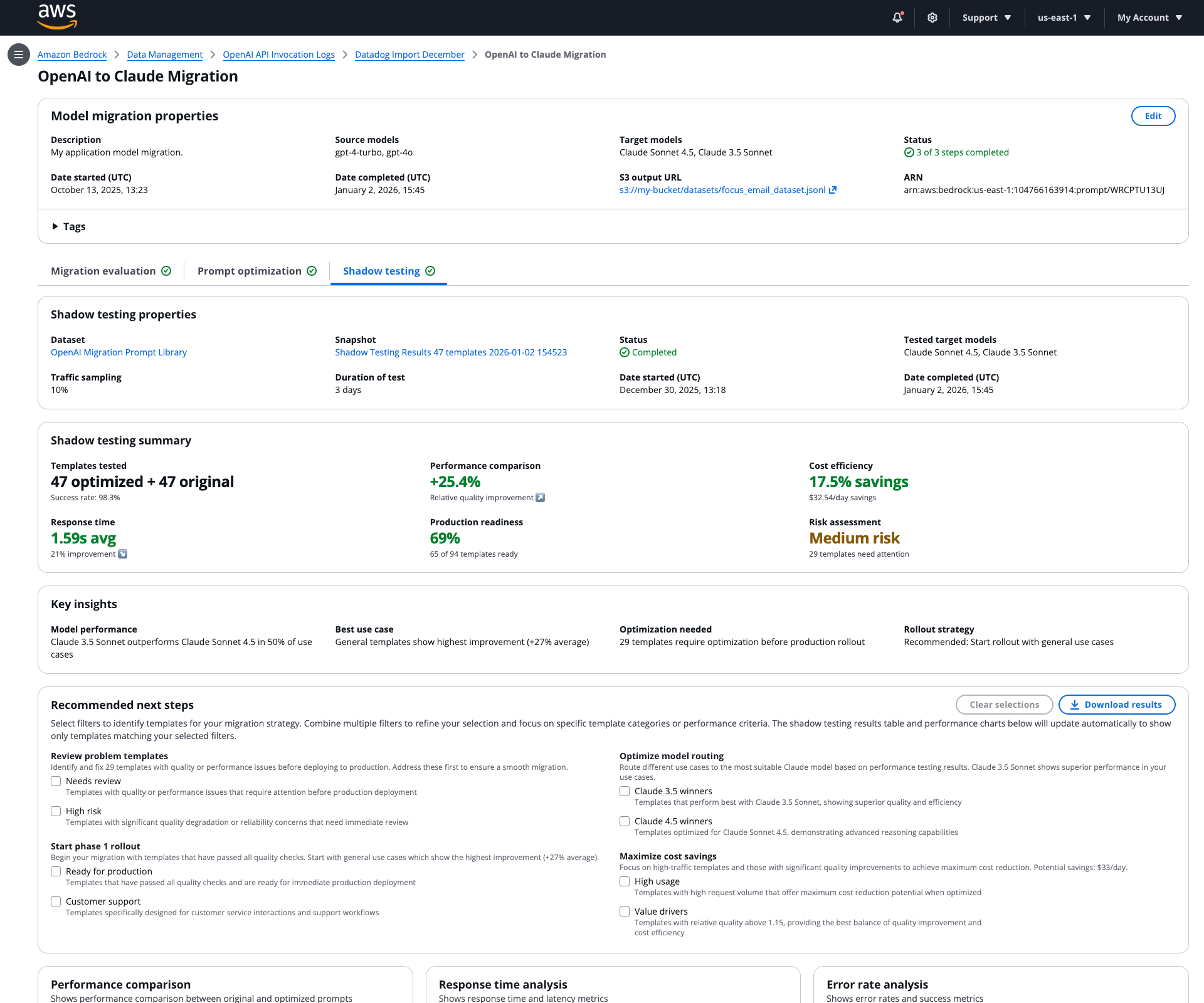

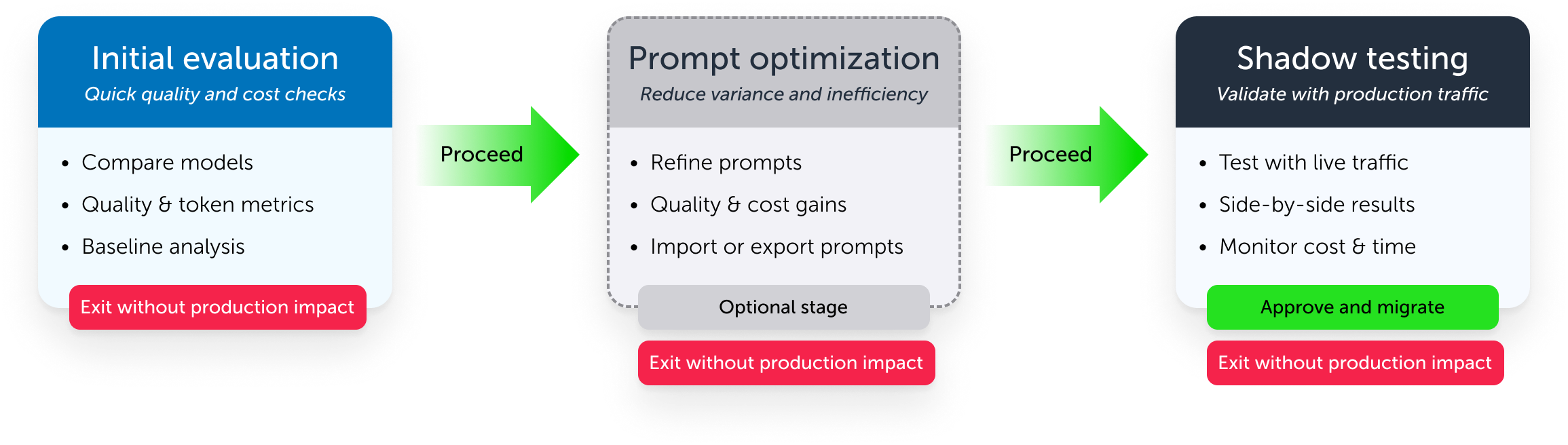

Model migration was designed as a decision lifecycle, not a workflow. The lifecycle consisted of three stages that progressively increased confidence while making cost and risk legible.

GUARDRAILS DESIGNED INTO EVERY STAGE

- Users can exit without irreversible impact

- Dynamic and integrated cost calculation

- If an error or failure occurs, stages can be retried

- Mandatory evaluation acts as a guardrail against premature production exposure

- The process keeps responsibility explicit: the system provides evidence, the user decides
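One way to read these guardrails is as a small state machine. The sketch below is illustrative only and is not how Bedrock implements the lifecycle. The `Stage` names mirror the three stages in this case study, while `MigrationLifecycle` and its methods are hypothetical names invented for the example. Requiring shadow-testing evidence before approval is also an assumption made here, not a documented rule.

```python
from enum import Enum


class Stage(Enum):
    INITIAL_EVALUATION = 1
    PROMPT_OPTIMIZATION = 2   # optional in the case study
    SHADOW_TESTING = 3


class MigrationLifecycle:
    """Hypothetical model of the lifecycle; not an actual Bedrock API."""

    def __init__(self):
        self.completed = set()   # stages that finished successfully
        self.approved = False    # only an explicit human decision sets this
        self.abandoned = False

    def run(self, stage, work):
        """Run one stage. A failure leaves state untouched, so it can be retried."""
        if self.abandoned:
            raise RuntimeError("migration was abandoned")
        # Mandatory evaluation: no later stage runs before the initial evaluation.
        needs_baseline = stage is not Stage.INITIAL_EVALUATION
        if needs_baseline and Stage.INITIAL_EVALUATION not in self.completed:
            raise PermissionError("initial evaluation must complete first")
        evidence = work()        # may raise; nothing has been recorded yet
        self.completed.add(stage)
        return evidence

    def abandon(self):
        """Safe exit: this object never touches production, so nothing to undo."""
        self.abandoned = True

    def approve(self, reviewer):
        """The system provides evidence; a named person makes the decision."""
        if Stage.SHADOW_TESTING not in self.completed:   # assumption, see lead-in
            raise PermissionError("shadow testing evidence is required")
        self.approved = True
        return f"approved by {reviewer}"
```

Note that `approve` takes a reviewer name and is never called by the system itself, which is the code-level equivalent of keeping humans responsible for approval and deployment.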

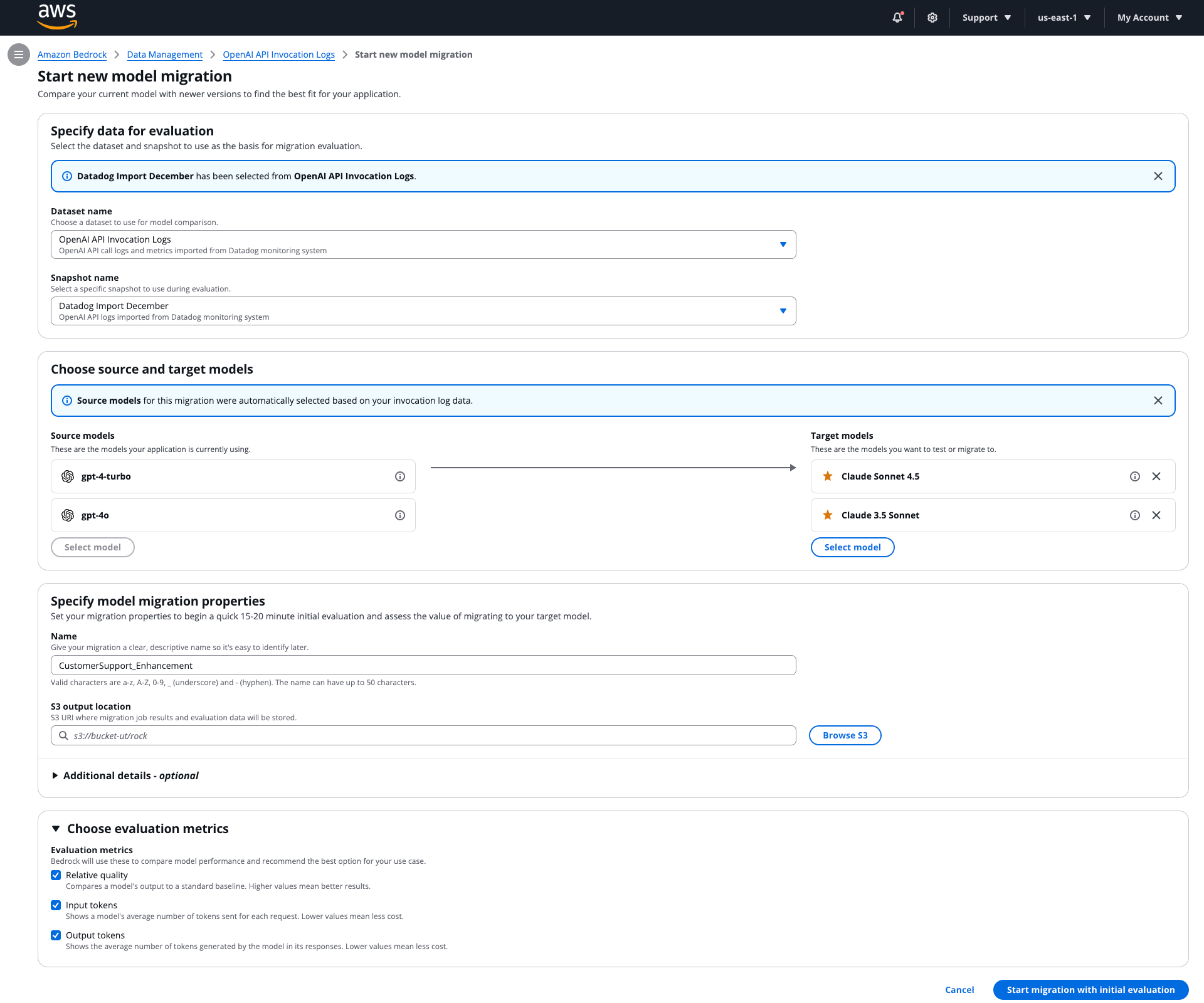

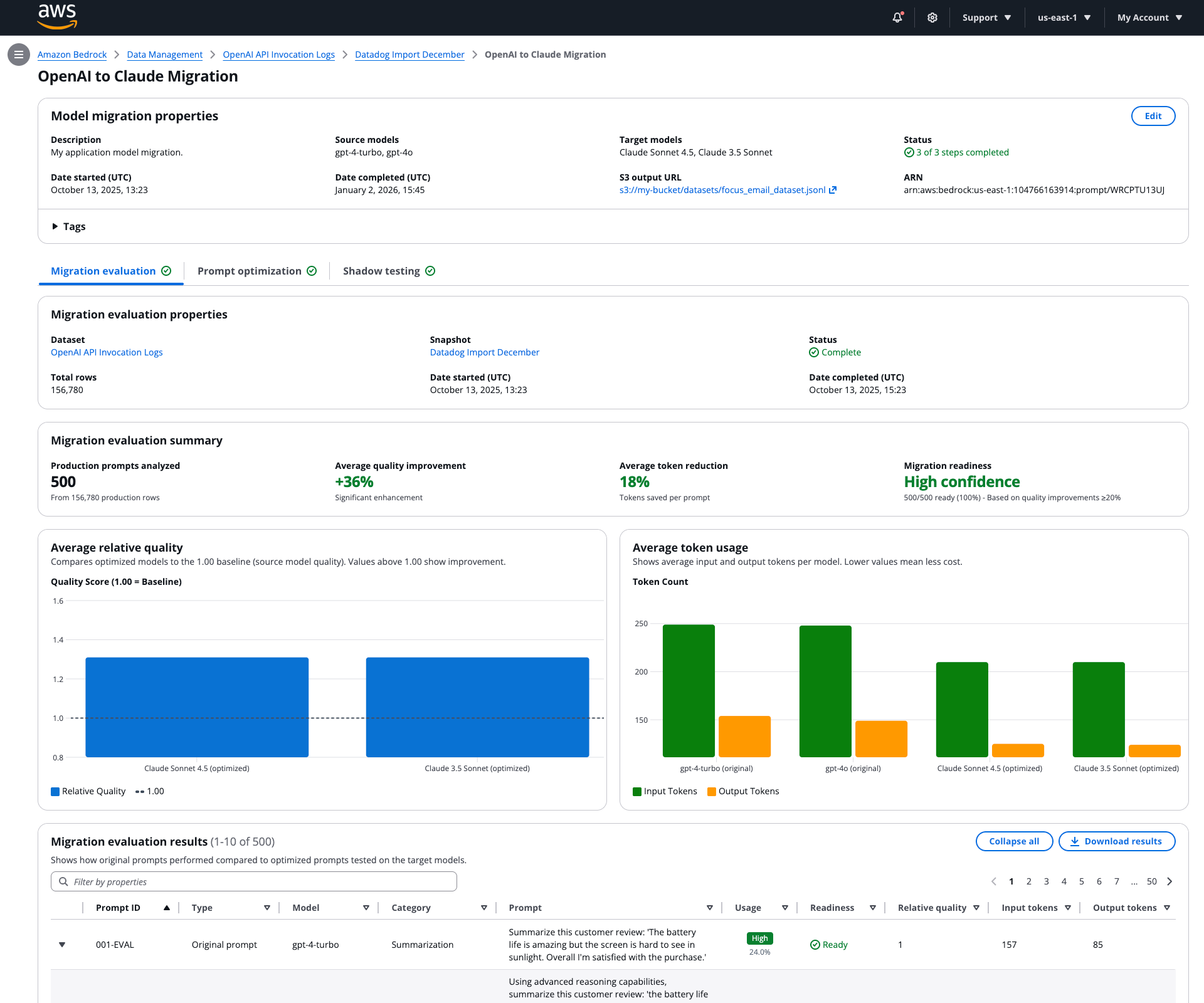

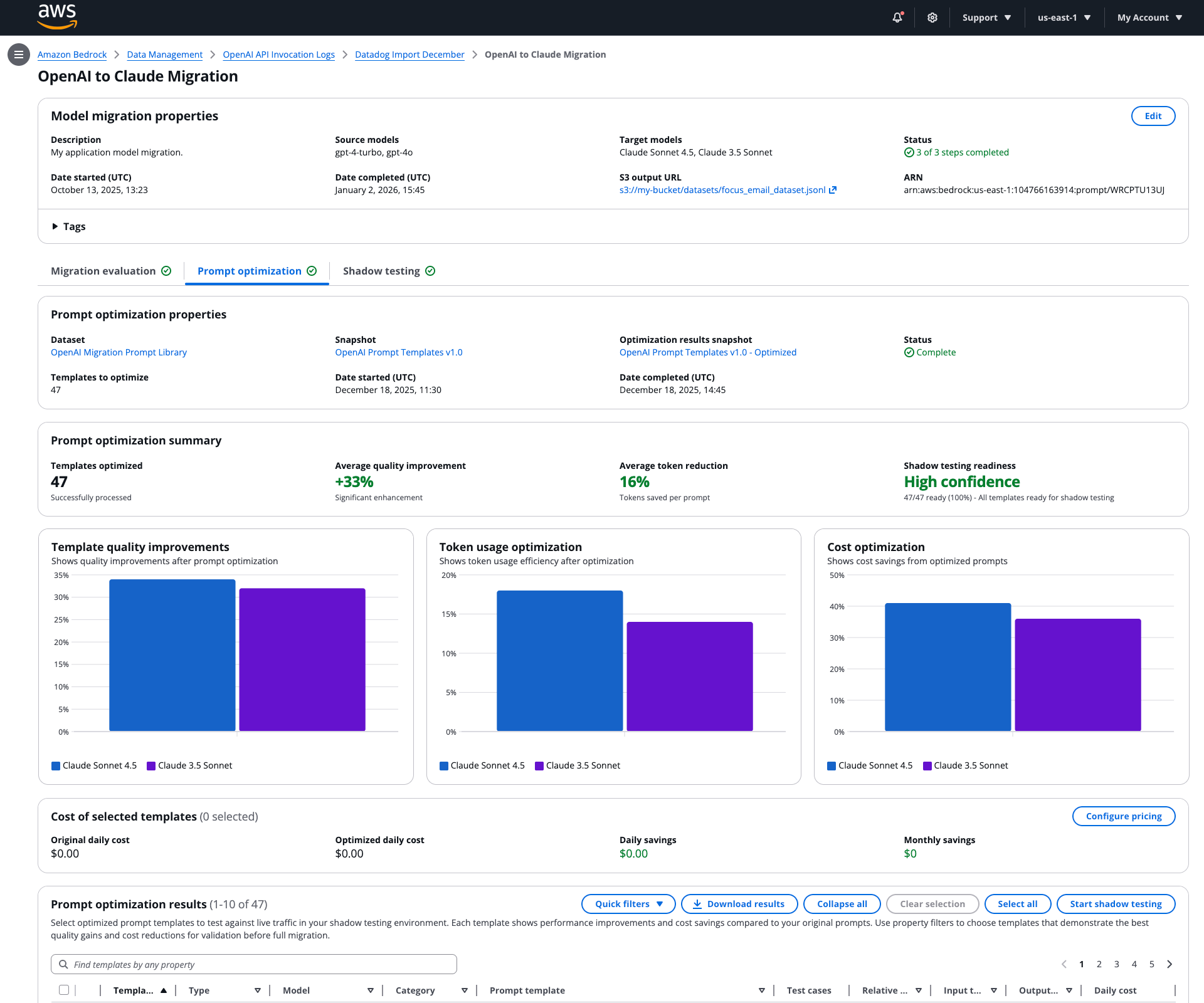

Stage 1: Initial evaluation

Users first provide their application's invocation log data (a record of what the AI did and what the result was). The system then performs an initial evaluation that compares their current model with the selected target model on quality and token utilization, before they commit to the later stages. This stage acts as a guardrail: a quick decision point on whether the migration is worth pursuing.

WHAT USERS NEED HERE

- Fast signal on relative quality and token use

- Clear baselines and comparison metrics

- A decision point: proceed or stop
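The comparison a user needs at this decision point can be sketched in a few lines. This is a minimal illustration, assuming quality is already summarized as a 0.0 to 1.0 score and token use as an average per invocation. `ModelSummary`, `compare`, and the `max_quality_drop` tolerance are hypothetical names and values, not part of Bedrock.

```python
from dataclasses import dataclass


@dataclass
class ModelSummary:
    mean_quality: float   # assumed 0.0 to 1.0 score from whatever evaluator is used
    mean_tokens: float    # average tokens per invocation; must be non-zero


def compare(baseline: ModelSummary, target: ModelSummary,
            max_quality_drop: float = 0.02) -> dict:
    """Summarize target vs. baseline. Reports evidence; does not decide."""
    quality_delta = target.mean_quality - baseline.mean_quality
    token_change_pct = (
        100.0 * (target.mean_tokens - baseline.mean_tokens) / baseline.mean_tokens
    )
    return {
        "quality_delta": round(quality_delta, 4),
        "token_change_pct": round(token_change_pct, 1),
        # A flag for the reviewer, not an automatic go/no-go.
        "within_tolerance": quality_delta >= -max_quality_drop,
    }
```

The result deliberately carries a `within_tolerance` flag rather than a "proceed" verdict, in keeping with the principle of automating analysis and comparisons while leaving the decision to the user.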

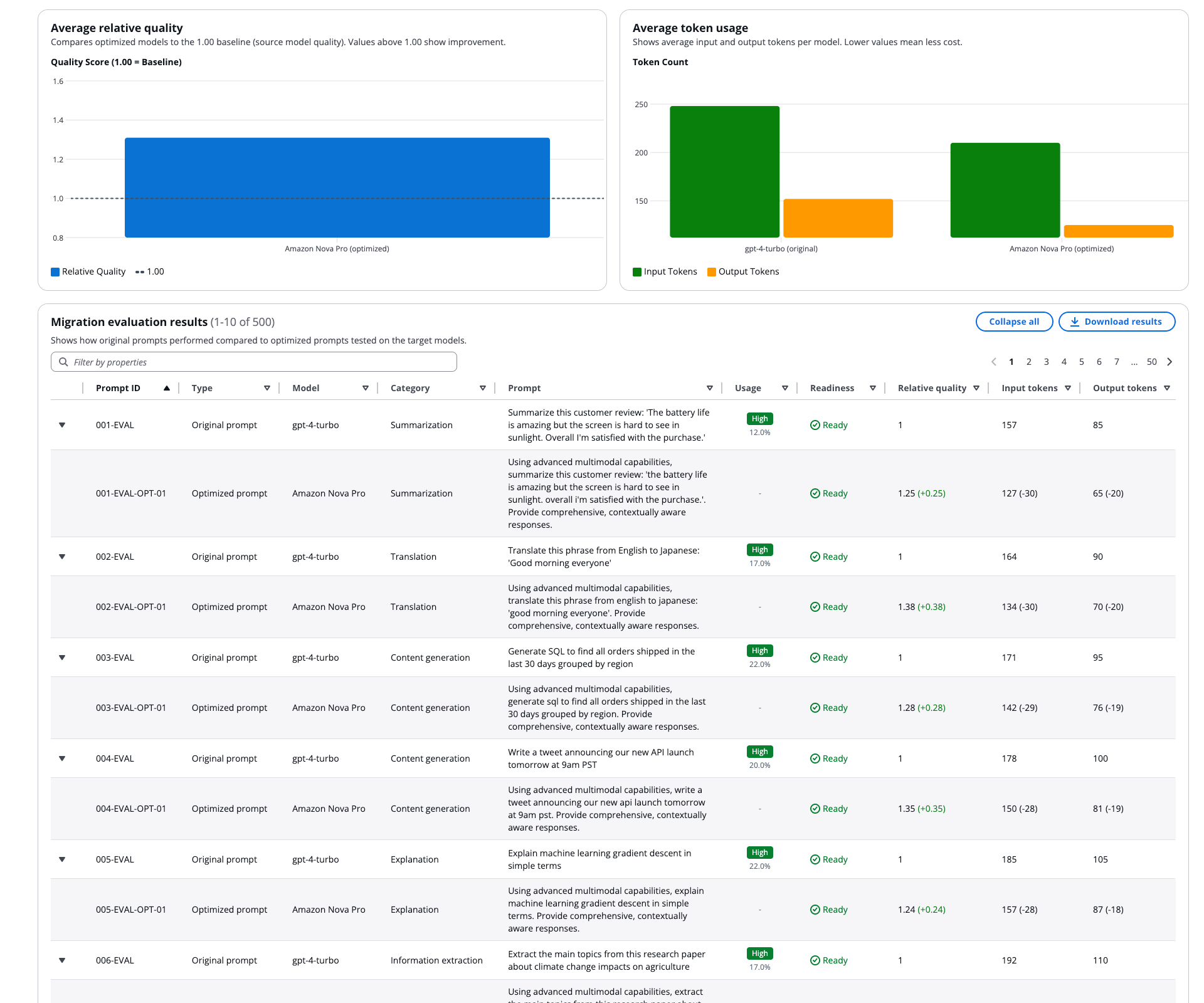

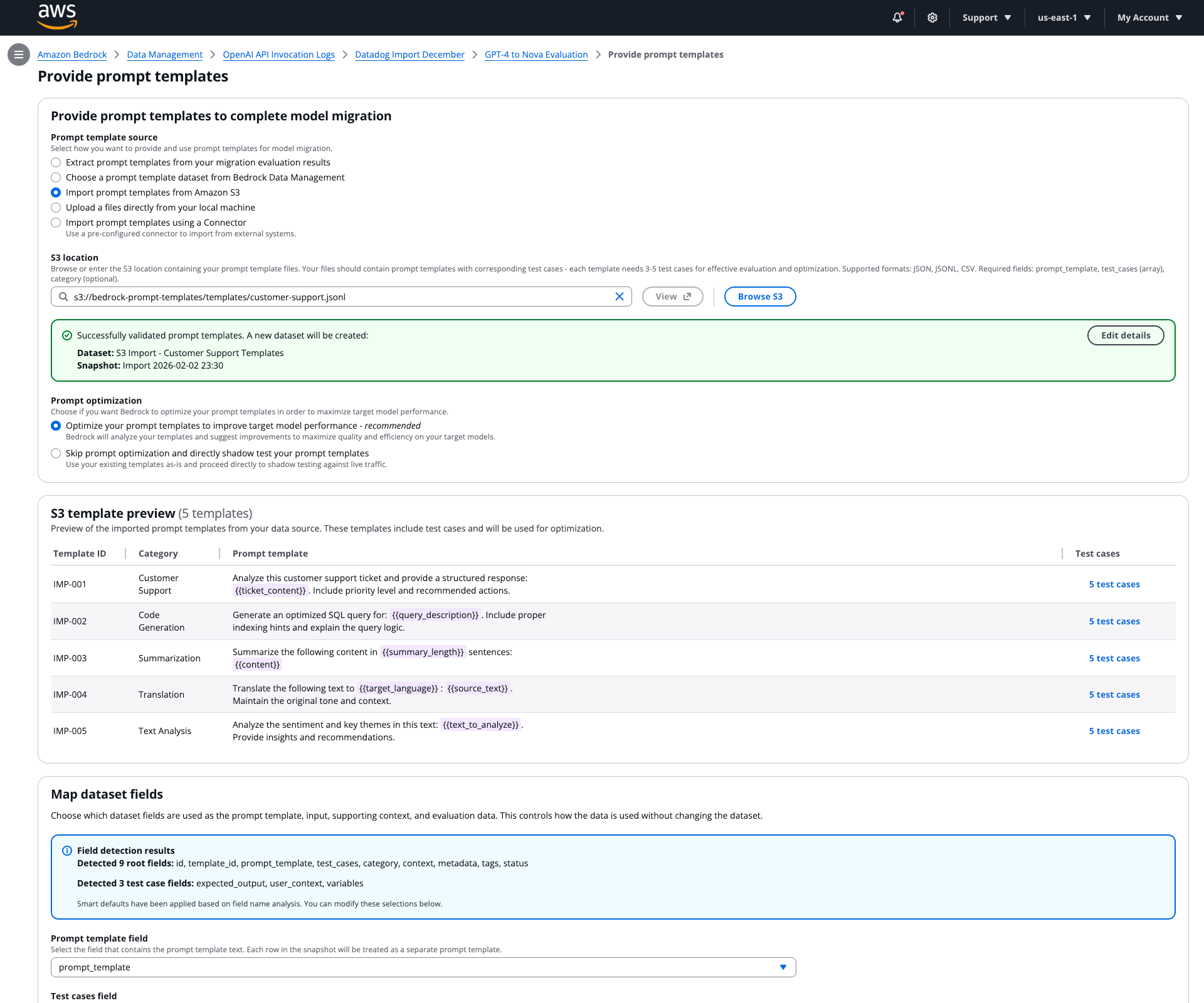

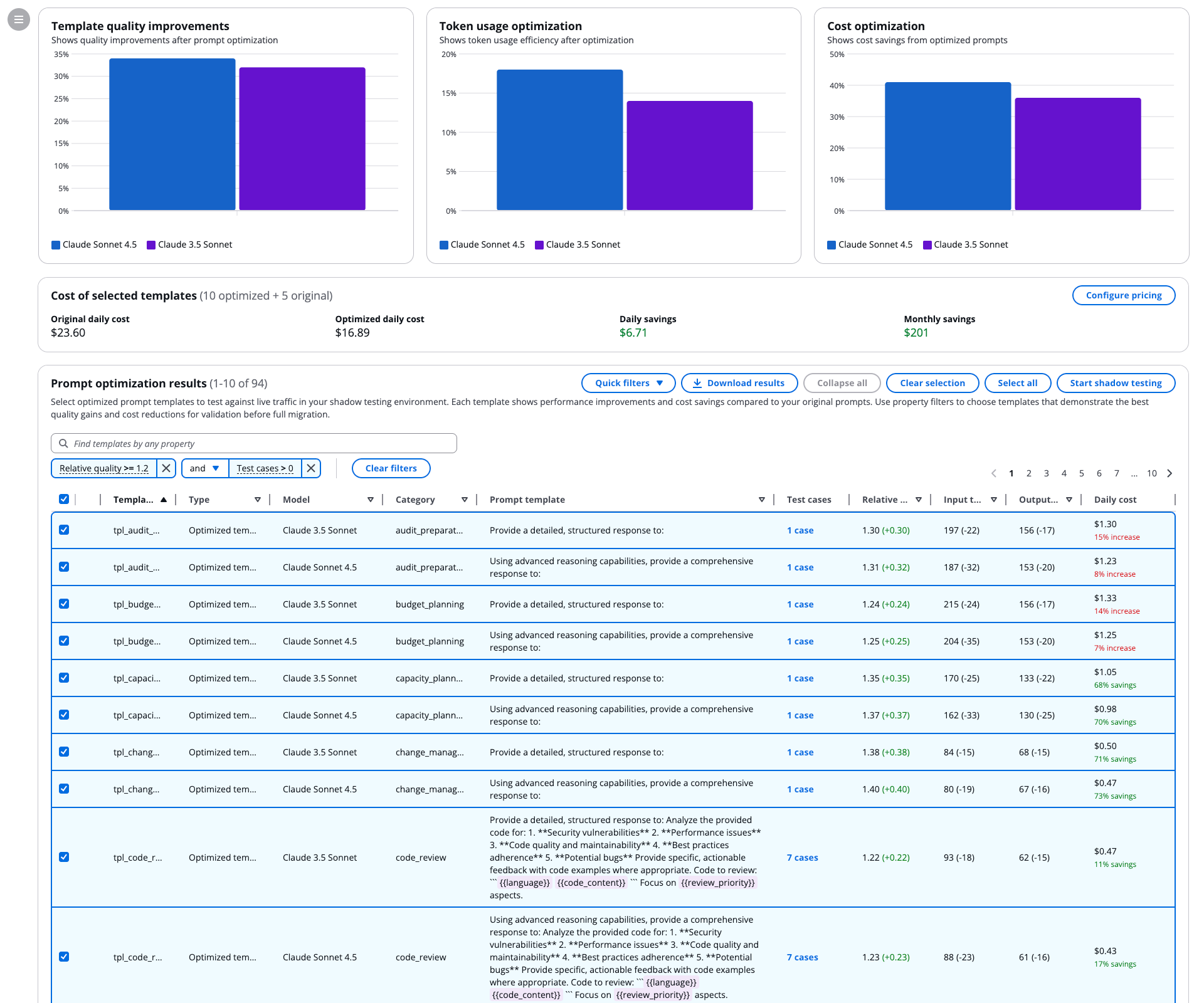

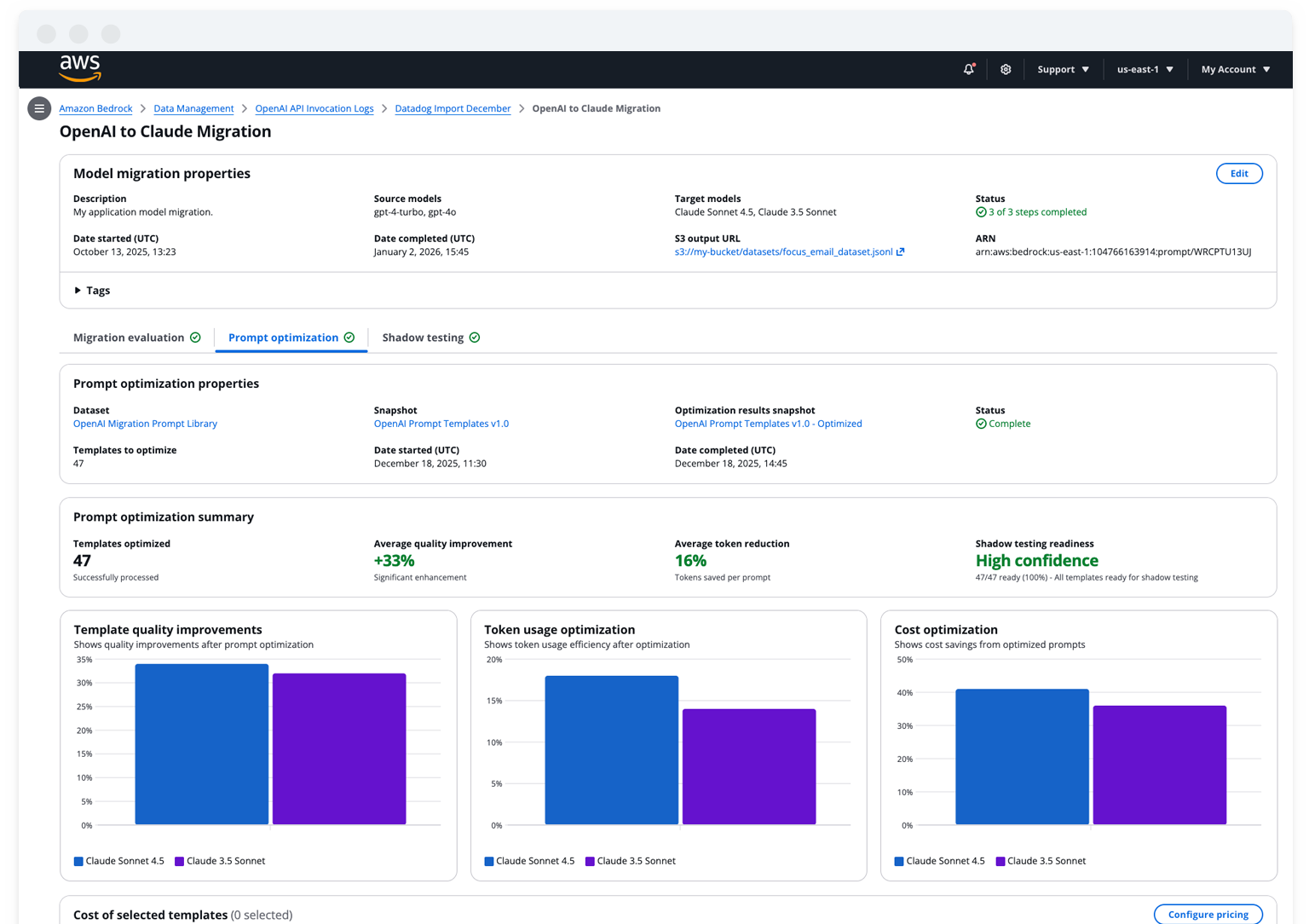

Stage 2: Prompt optimization

Users can provide prompt templates to the system in several ways. From there, they can run prompt optimization in the system or skip directly to shadow testing.

The goal of this stage is to improve relative quality and cost efficiency before deeper investment. Optimization is a lever, not a requirement, and should not block teams that want to evaluate with their own methods.

WHAT USERS NEED HERE

- A clear promise: what optimization can and cannot do

- Visibility into which prompts improved and by how much

- The ability to import results if they optimize externally

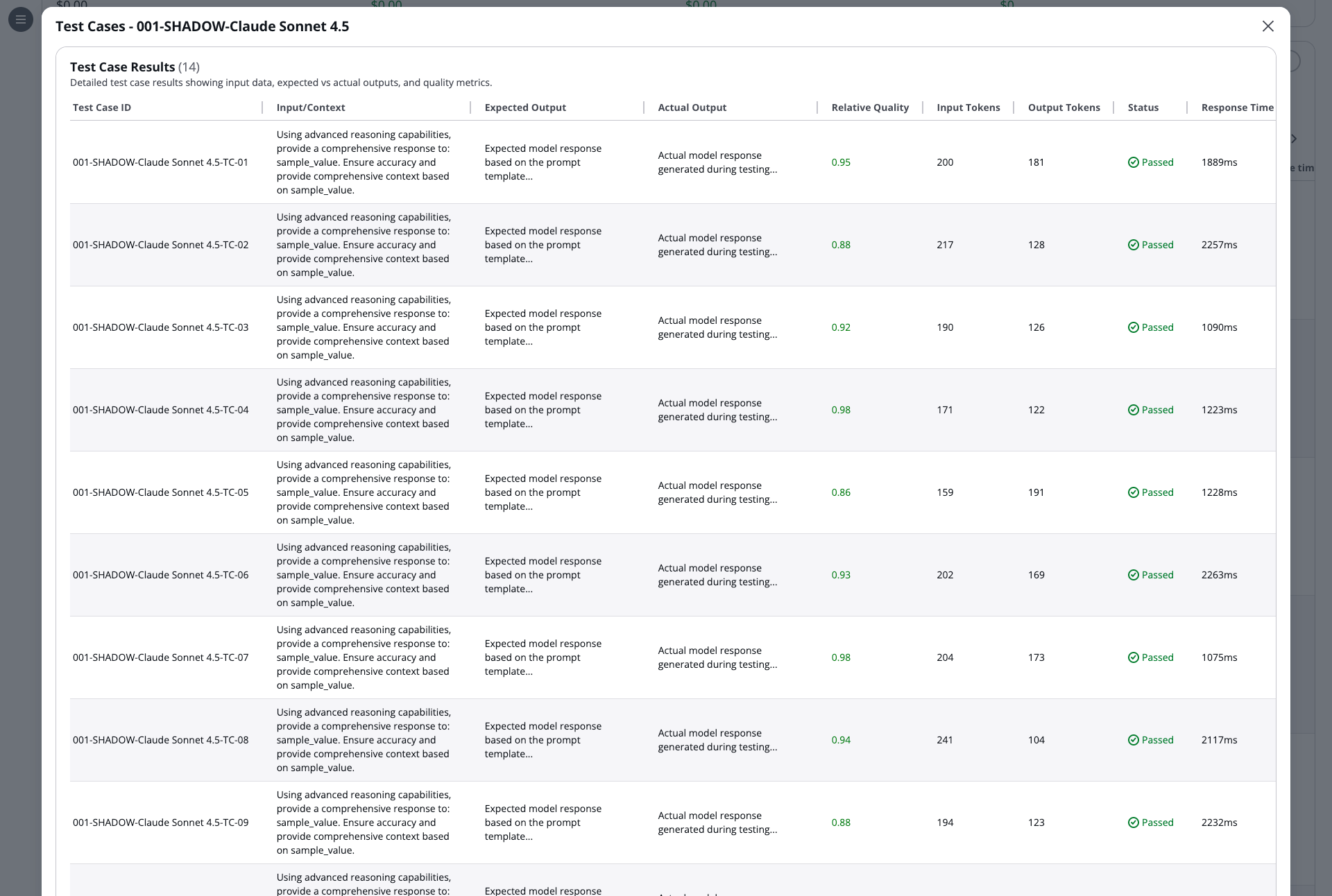

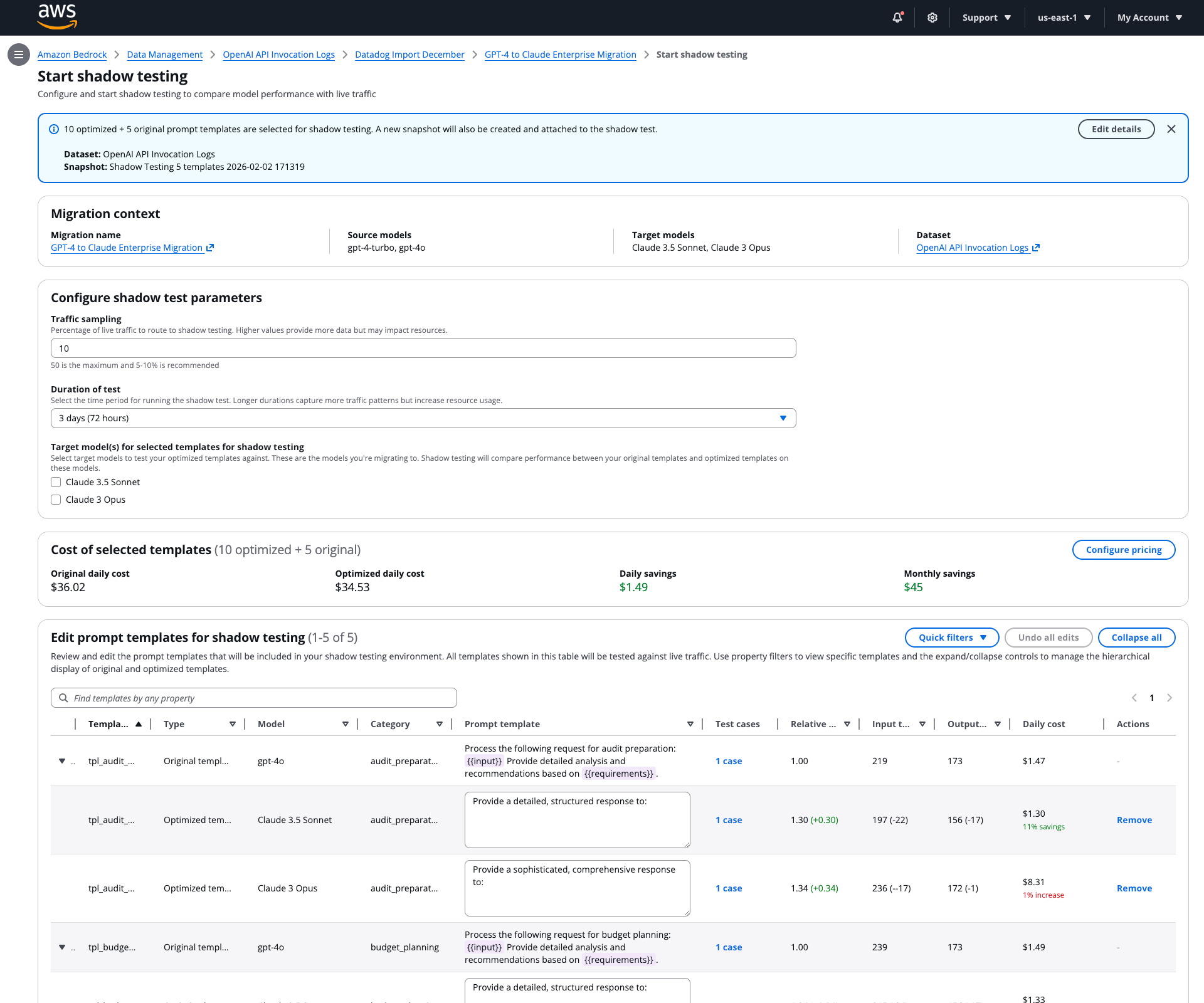

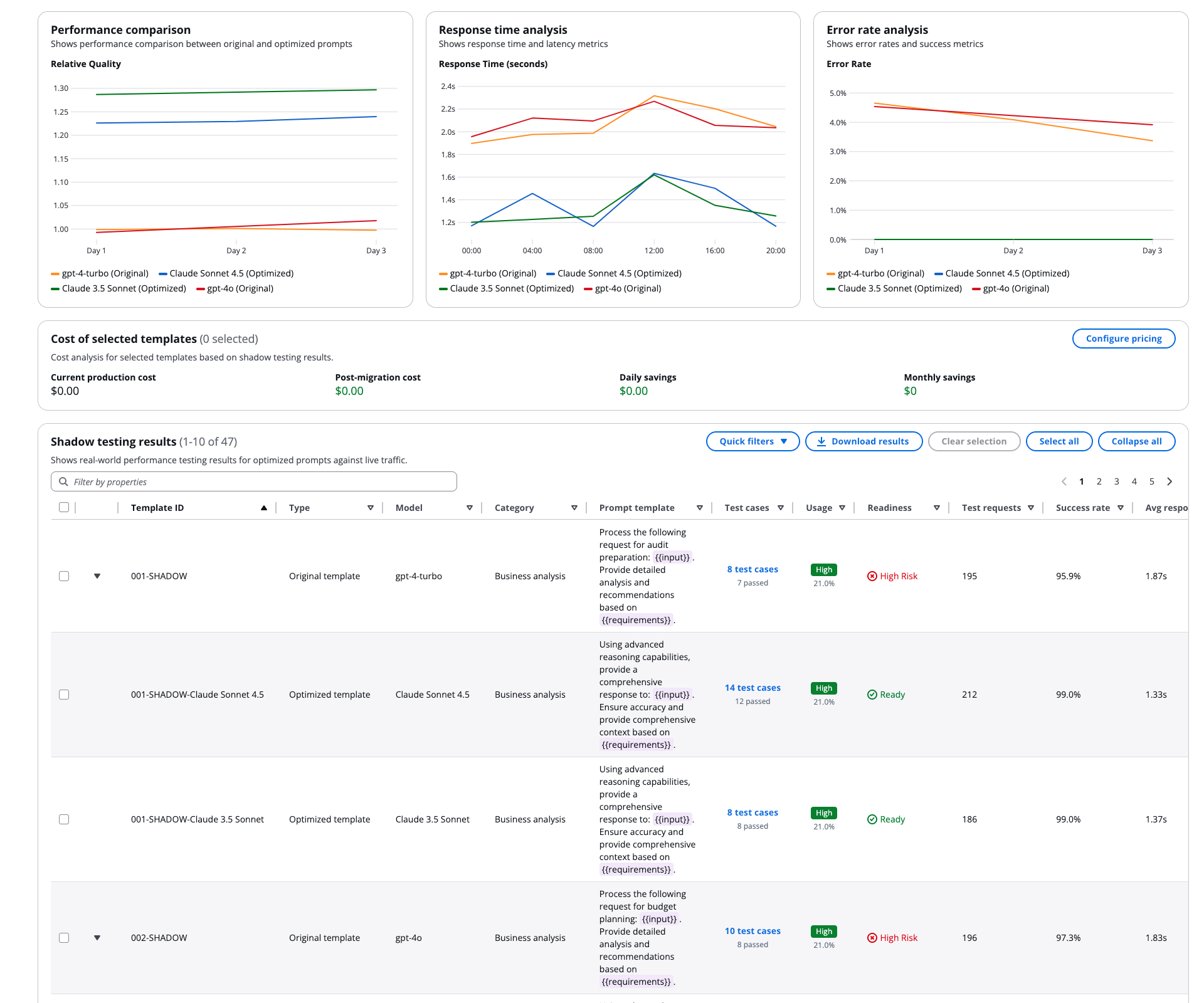

Stage 3: Shadow testing

After providing optimized prompt templates and test data, users configure a traffic sample and duration to begin shadow testing. Shadow testing produces production-like evidence without production impact.

WHAT USERS NEED HERE

- A controlled time window and clear cost expectations

- Streaming results so they can stop early

- Side-by-side diffs for outputs and key metrics
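The three needs above (a bounded window, streaming results, side-by-side records) map naturally onto a generator. The sketch below is an assumption-laden illustration, not the product's implementation: `shadow_records`, `call_current`, and `call_target` are hypothetical names, and the two callables stand in for whatever invokes each model. Responses are only recorded for comparison and are never returned to end users.

```python
import random
import time


def shadow_records(requests, call_current, call_target,
                   sample_rate, max_seconds,
                   rng=None, clock=time.monotonic):
    """Yield one side-by-side record per sampled request until the window closes."""
    rng = rng or random.Random()
    deadline = clock() + max_seconds          # controlled time window
    for request in requests:
        if clock() >= deadline:
            return                            # window elapsed: stop cleanly
        if rng.random() >= sample_rate:
            continue                          # request not in the traffic sample
        yield {
            "request": request,
            "current": call_current(request),
            "target": call_target(request),   # recorded only, never served
        }
```

Because records are yielded one at a time, a caller can stop early simply by breaking out of the loop, for example once enough evidence has accumulated or a cost limit is reached.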

Tradeoffs, challenges, and decisions

Shipping a trustworthy lifecycle required deliberate scope cuts. Centralized data management, schema flexibility, and expanded observability were intentionally deprioritized to validate the migration lifecycle itself with real customers.

WHAT WAS DEFERRED AND WHY

- Expanded data management: required separate infrastructure; deferred to protect core delivery

- Schema flexibility: added evaluation complexity; scoped to a future release

- Retries and non-linear branching: needed additional resourcing; scoped to a future release

Impact and what this enabled

This work transformed model migration from a fragile, manual effort into a controlled, repeatable process suitable for production GenAI systems.

CUSTOMER-LEVEL IMPACT

- Faster confidence in whether an upgrade is worth pursuing

- Safer experimentation without exposing production traffic prematurely

- Clearer expectations around token utilization and cost

- Stronger control over decision-making and ownership

PLATFORM-LEVEL IMPACT

A lifecycle foundation that unlocks future investments in:

- Data management

- Observability

- Governance and policy controls

- Standardized baselines and comparisons

CLOSING PRINCIPLE

Enterprise AI succeeds when change is visible, risk is bounded, and accountability is unavoidable.

Portfolio

AWS Glue StudioDesigning a configuration-driven data pipeline builder for enterprise scale

Career exploration for Workday's Career HubDesigning AI-assisted career exploration with human judgment at the center

Live Ops Alerting DashboardDesigning clarity for real-time operational decision-making

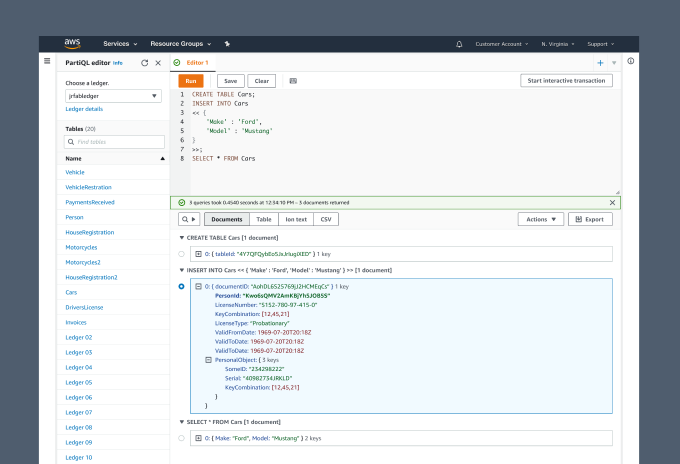

PartiQL Editor for Amazon QLDBUX Case Study

Asurion Virtual AgentUI Design

Enhanced chat for Chase mobileUI-UX design

UI for James Bond 007: World of espionageDesign system

Chase mobileUI-UX Design

Transaction details for Chase mobileUX Case study

Upgrade systems for Rival FireUX case study